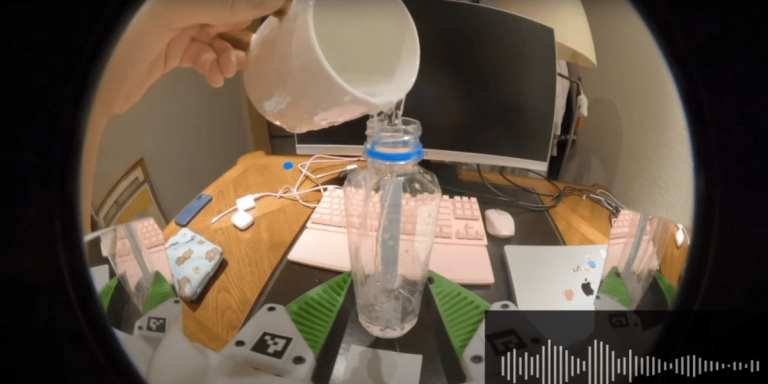

Researchers at the Robotics and Embodied AI Lab at Stanford University set out to change that. They first built a system for collecting audio data, consisting of a GoPro camera and a gripper with a microphone designed to filter out background noise. Human demonstrators used the gripper for a variety of household tasks, and the team then used this data to teach robotic arms how to execute the tasks on their own. The team’s new training algorithms help robots gather clues from audio signals to perform more effectively.

“So far, robots have been training on videos that are muted,” says Zeyi Liu, a PhD student at Stanford and lead author of the study. “But there’s a lot of useful data in audio.”

To test how much more successful a robot can be if it’s capable of “listening,” the researchers chose four tasks: flipping a bagel in a pan, erasing a whiteboard, putting two Velcro strips together, and pouring dice out of a cup. In each task, sounds provide clues that cameras or tactile sensors struggle with, like knowing whether the eraser is properly contacting the whiteboard, or whether the cup contains dice.

After demonstrating each task a couple hundred times, the team compared the success rates of training with audio versus training with vision only. The results, published in a paper on arXiv that has not been peer-reviewed, were promising. When using vision alone in the dice test, the robot could tell only 27% of the time whether there were dice in the cup, but that rose to 94% when sound was included.

It isn’t the first time audio has been used to train robots, Liu says, but it’s a big step toward doing so at scale. “We’re making it easier to use audio collected ‘in the wild,’ rather than being restricted to collecting it in the lab, which is more time-consuming.”

The research signals that audio might become a more sought-after data source in the race to train robots with AI. Researchers are teaching robots faster than ever before using imitation learning, showing them hundreds of examples of tasks being done instead of hand-coding each task. If audio could be collected at scale using devices like the one in the study, it could provide an entirely new “sense” to robots, helping them adapt more quickly to environments where visibility is limited or not useful.

“It’s safe to say that audio is the most understudied modality for sensing” in robots, says Dmitry Berenson, associate professor of robotics at the University of Michigan, who was not involved in the study. That’s because the bulk of robotics research on manipulating objects has been for industrial pick-and-place tasks, like sorting objects into bins. Those tasks don’t benefit much from sound, instead relying on tactile or visual sensors. But as robots expand into tasks in homes, kitchens, and other environments, audio will become increasingly useful, Berenson says.

Consider a robot trying to find which bag contains a set of keys, all with limited visibility. “Maybe even before you touch the keys, you hear them kind of jangling,” Berenson says. “That’s a cue that the keys are in that pocket, instead of others.”

Still, audio has limits. The team points out that sound won’t be as useful with so-called soft or flexible objects like clothes, which don’t create as much usable audio. The robots also struggled to filter out the sound of their own motors during tasks, since that noise was not present in the training data produced by humans. To fix it, the researchers needed to add robot sounds (whirs, hums, and actuator noises) into the training sets so the robots could learn to tune them out.
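
The article does not say how the robot sounds were added to the training sets. As a rough illustration only, one common way to do this kind of augmentation is to overlay a random slice of recorded motor noise on each human demonstration clip at a chosen signal-to-noise ratio. The function name `mix_robot_noise`, the `snr_db` parameter, and the additive mixing scheme below are all assumptions made for this sketch, not details from the study.

```python
import numpy as np

def mix_robot_noise(demo_audio, robot_noise, snr_db, rng=None):
    """Overlay a random crop of robot noise on a demo clip at a target SNR (dB).

    Hypothetical helper: the study's actual augmentation is not described
    in the article; this is plain additive mixing.
    """
    rng = np.random.default_rng() if rng is None else rng
    demo_audio = np.asarray(demo_audio, dtype=np.float64)
    robot_noise = np.asarray(robot_noise, dtype=np.float64)

    n = len(demo_audio)
    # Repeat the noise recording if it is shorter than the demo clip.
    if len(robot_noise) < n:
        reps = int(np.ceil(n / len(robot_noise)))
        robot_noise = np.tile(robot_noise, reps)

    # Pick a random window so each augmented clip gets different noise.
    start = rng.integers(0, len(robot_noise) - n + 1)
    noise = robot_noise[start:start + n]

    signal_power = np.mean(demo_audio ** 2)
    noise_power = np.mean(noise ** 2)
    if signal_power == 0 or noise_power == 0:
        return demo_audio.copy()  # nothing to scale against

    # Scale the noise so 10*log10(signal_power / scaled_noise_power) == snr_db.
    target_noise_power = signal_power / (10 ** (snr_db / 10))
    scale = np.sqrt(target_noise_power / noise_power)
    return demo_audio + scale * noise
```

Calling `mix_robot_noise(clip, motor_recording, snr_db=10)` on each demonstration clip, with a range of `snr_db` values, would expose a model to the task sounds both with and without motor noise, which is the general idea the researchers describe.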

The next step, Liu says, is to see how much better the models can get with more data, which could mean more microphones, collecting spatial audio, and adding microphones to other types of data-collection devices.